Helpful Hints on Developing a User Friendly Database

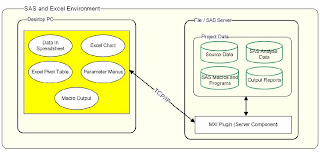

Developing an effective database application requires an interface that is easy for the user to work with. This paper explores the features of SAS/AF and methodologies for building a successful database. It combines user interface suggestions for the front end with back-end SCL, SQL, and DATA step logic that makes the software efficient to program and to operate. The majority of the examples are technical tips, but there are also lessons learned from collaborating with end users, which prove to be very important in creating an effective application.

Overview

There are many solutions for creating a data entry system, ranging from a simple Excel spreadsheet to a sophisticated Oracle database. Each set of technologies works well for a specific task. This paper explores a database containing clinical information used in regulatory submission. SAS/AF is well suited to this, since all analysis work for clinical data requires SAS. The scenario of this particular proj...